Why is the granularity returned by the Linux driver so low?

Submitted by Ambrose on Tue, 2016-11-22 10:52

The problem? The granularity of the readings in Linux is way too low.

In MacOSX, the sample program returns numbers in at least the hundreds range. I can see the numbers change if I try very hard to cover where I think the sensors are. I get nonzero readings even in late afternoon when the room is nearly dark. In MacOSX, the light sensors felt super sensitive.

In Linux, the kernel returns an ordered pair like (12, 0) if I turn on my bright spotlights. If you put a piece of tape over the webcam like a lot of people do, you get something more like (9, 0).
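For anyone who wants to reproduce these readings, here is a minimal sketch of how the pair could be read and parsed. The sysfs path is an assumption (it is where the `applesmc` driver commonly exposes its `light` attribute, but it may differ on your machine), and the helper names `parse_light` and `read_light` are made up for illustration.

```python
import re
from pathlib import Path

# Assumed location of the light attribute; adjust for your machine.
LIGHT_PATH = Path("/sys/devices/platform/applesmc.768/light")


def parse_light(text):
    """Parse a reading such as "(12, 0)" into a (left, right) tuple of ints."""
    match = re.fullmatch(r"\((\d+),\s*(\d+)\)", text.strip())
    if match is None:
        raise ValueError(f"unexpected light reading: {text!r}")
    return int(match.group(1)), int(match.group(2))


def read_light(path=LIGHT_PATH):
    """Read the file once and return the parsed (left, right) pair."""
    return parse_light(Path(path).read_text())


if __name__ == "__main__":
    try:
        left, right = read_light()
        print(f"left={left} right={right}")
    except (OSError, ValueError) as exc:
        # The file may not exist (different hardware or driver not loaded).
        print(f"could not read {LIGHT_PATH}: {exc}")
```

Running this in a loop while covering and uncovering the sensors should make it easy to see how coarse the steps between readings are.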

First disturbing thought: No matter what the ambient lighting looks like, Linux gives you zero for the right-hand-side sensor.

Even more disturbing: If I turn on just my regular 60W light I get all zeroes. From Linux’s point of view there’s no difference between no ambient light at all and having about 800 lumens dispersed in a small room. Heck, I get zero even during the day, right next to a window (so I live in an apartment – we get crappy lighting in apartments, but still). Compared to MacOSX, in Linux the light sensors seem so insensitive they are practically useless.

If we get zero even during the day, what is the point of reading from the light detectors? I can’t even tell night from day, or a darkened lecture hall from a brightly-lit classroom.

Short of reading the kernel source code, is there even a way to figure out why the granularity is so low?

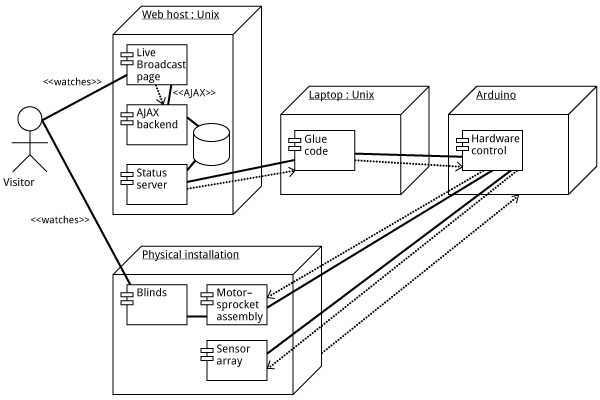

How should we even describe this as an “alt text”? (Let’s ignore text browser users for the moment.) Describing the picture certainly wouldn’t work; what matters are not the visual elements themselves, but their relationships to each other.

Even worse: Imagine this being exported into PDF (or SVG, or EPS), then embedded into InDesign. Suppose the InDesign file is going to ultimately end up as an accessible PDF. But the text in the diagram is going to be a jumbled mess. So what accessibility are we talking about? Are we deluding ourselves?

This has serious implications: Imagine, for example, a piece of online instructional material full of such diagrams. Under the AODA, organizations are supposed to be able to supply this in an “accessible format.” What does it even mean for this to be accessible?

“Old media requires lubrication.”

This was one of the answers to the fake questionnaire I was given at Night Kitchen during last year’s Nuit Blanche. Back then the answer didn’t really make much sense to me, and in fact I thought the answer was bizarre. But of course, I hadn’t been involved in any “old media” creation that would have required lubrication.

Imagine how I felt when I had left the installation turned on for the night and then discovered bits of the sprocket wheel on the wooden frame. I was so glad the wheel had not been destroyed.

So I guess I can now sympathize with that answer to that fake question: Old media does require lubrication. It probably requires daily lubrication, even. But does that mean our installation, with such a strong electronics component, is still “old media”? So “new media” is virtual only? I don’t know if I can side with this conclusion, yet.
